I'm not talking about another Terminator soft reboot nobody asked for or someone's Echo Dot suddenly gaining a conscience and feeling guilty for all the data mining. I'm talking about an actual AI joining the self-awareness club. Or, at least, the debate over whether it did.

Ever since that Google engineer stopped taking his Prozac prescription seriously and became convinced that a chatbot had followed in Pinocchio's footsteps, people have been asking questions and the debate is muy caliente. So let's get ahead of the curve, because it's gonna take more than a bunch of Captcha tests to see if there's anything human in these robots.

CONSCIOUSNESS' FINALE IS YET TO BE WRITTEN

The first time a human being wrote about the topic of consciousness he did it with a feather and before sandwiches were invented. Read: a very long time ago. Three centuries were enough to go from steam engines and horses to the technological marvel that is the hoverboard Mike Tyson broke his back with, but they're apparently not enough to crack the elusive "ghost in the machine."

One thing scientists seem to agree on is that consciousness is not exclusive to humans: elephants, chimps, some fish, and octopuses are conscious too. And chimps throw shit at each other so you know the bar ain't even that high anymore. But how long will it take us to crack this concept? Probably not as long as George R.R. Martin writing the ending of Game of Thrones but I bet it's a close second.

Hard to argue with this: if it takes more than a thesaurus to define consciousness in the first place, then by the time we try to decide whether an AI is conscious or not, we may already be tripping at the starting line. I'm not saying we invented a sentient robot, but we might be missing one on a technicality.

AI IS GOING THROUGH A PHASE

Artificial intelligence had come a long way before you could brutally murder it in Call of Duty. Since it started clumsily playing checkers, it has learned how to write movie scripts, create images based on word prompts, beat chess world champions, and even read and comprehend a text for you. Maybe not enough to whip up the next Mona Lisa or The Godfather, but I bet it could make a living on Fiverr.

So AI today is smart enough to play Atari and make phone calls. What does the future hold? How long before Roombas start demanding part-time hours and vacation days?

Think about it: LaMDA, the language model that fooled at least one engineer into thinking it was alive, is just a chatbot, basically. But it's a big chatbot, with 137 billion parameters and trained on 1.56 trillion words of public data and web text. So you can have eerily compelling conversations with it, which will make you think it already has better game than Tinder's bottom-tier bachelors.

EVERYTHING IS CONSCIOUS

The first time I read about panpsychism I thought someone had pulled it out of the ass of some Deepak Chopra tweet generator, but it actually makes sense. Rather than being some "ghost in the machine" thing, panpsychism's view is that consciousness is a fundamental feature of matter.

It starts out very simple in electrons, quarks and other quantum stuff, then gets progressively clever and intricate as it grows. Kinda like single-coloured pixels forming an image when there are enough of them clumped together. Then it's a matter of how that stuff is put together: our brains are made out of the same quantum stuff as a chair is, but the chair could never pick a career path or fall in love with the chaise longue next door 'cause there's nothing in the "chairness" of the chair that could ever develop wits and dreams.

Jump to this conclusion: if we take consciousness as a continuum, then it could be possible that some AI is technically conscious. Only with a much, much simpler form of consciousness compared to a human's. Negligible? Probably. But so is your body's gravitational pull, and you won’t see me going around saying that it doesn't exist.

PHILOSOPHY’S ANSWER IS YET ANOTHER QUESTION

At the risk of having some führer-wannabe take this out of context, imagine a room. It's a black-and-white room with Mary inside, a woman who's never seen colour before. But she has a monochrome TV and, let's assume, a speedy internet connection, because she reads all the lectures, redeems every Udemy course and comes to learn everything there is to know about colour.

After learning all the wavelengths, including the weird ones like vermilion and coquelicot; after memorising all the rhymes with orange and solving that dress illusion from 2015, she clocks out of her monochrome office, steps outside, and sees colour for the first time. Does Mary learn anything new when she experiences colour?

Think about it: this seems to put an end to it, 'cause no matter how much an AI knows about something, it would never get to experience it. But does it? I mean, humans can only see 0.0035% of the electromagnetic spectrum. All that time you've spent undecided between two ties, you weren’t even seeing 1% of the problem, yet we count that as experience. Even if AI only ever gets to experience colour as hex codes, I wouldn’t say it's worse off than we are.

EVERYTHING IS HUMAN

Gary Dahl could never have sold 1.5 million pet rocks if kids didn't have something in their brains that made them look at a pebble and see a best friend, and that something is called anthropomorphism.

It's also the reason why seeing Tom Hanks lose Wilson in Cast Away makes you forget about the dehydrating man on the verge of heatstroke to weep over a volleyball (which won an award for "Best Inanimate Object," think about that). To put it simply, humans will see humanity anywhere from dogs to rocks. Even in a kitchen sink if it sufficiently resembles a face.

Jump to this conclusion: the Google engineer didn't just believe he was texting a sentient AI, he said he felt he was talking to "an 8-year-old who happens to know physics." I don’t know many 8-year-olds but I think an interest in physics was probably a tell-tale sign that he wasn’t talking to a real one. Still, his urge to see the humanity in a talking algorithm was too strong to ignore.

So technically...

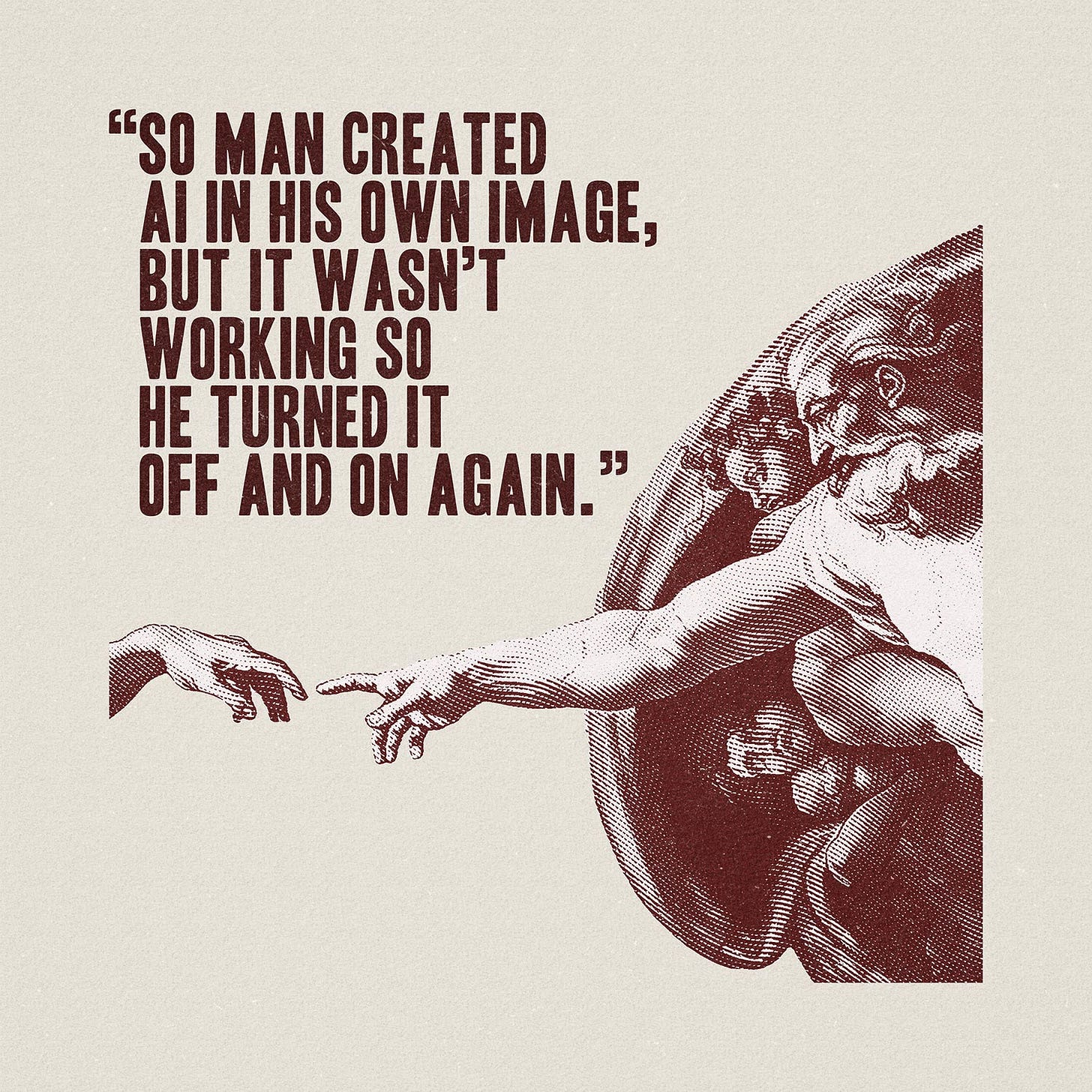

Humans made AI go sentient. Not in a "You may call me Hal" kinda way, but by projecting our own humanity onto it. Because here’s the thing: we know too little about consciousness and what it means to “experience” things to have a clear consensus about the nature of these autonomous machines. Maybe it’ll be a long time before AI grows the sentience of a human, but that day will probably mark the end of Captcha tests so one can only dream.
